GDPR Anonymization for Cross-Border Data Transfers

Post Summary

Transferring healthcare data internationally under GDPR is tricky, but anonymization can simplify compliance. Here’s why it matters:

- GDPR rules for cross-border data transfers (Articles 44–50) are strict, especially for sensitive healthcare data like patient records.

- Anonymized data is exempt from GDPR. Unlike pseudonymized data, anonymization makes re-identification impossible, removing the need for safeguards like Standard Contractual Clauses (SCCs).

- Challenges exist. Healthcare data is hard to anonymize due to quasi-identifiers (e.g., age, gender, location), which can lead to re-identification when combined with external datasets.

Key anonymization techniques:

- k-Anonymity: Groups individuals into clusters to reduce uniqueness.

- Differential Privacy: Adds noise to data to obscure individual details.

- Generalization & Suppression: Simplifies or removes data points to protect identities.

Risk assessment is essential. Tools like ARX and Amnesia help evaluate re-identification risks. Regular audits and proper documentation ensure compliance and avoid hefty fines (up to €20M or 4% of global turnover).

This article breaks down methods, risks, and tools for GDPR-compliant anonymization in healthcare.

Learn Data Anonymization Techniques for GDPR & HIPAA Compliance

sbb-itb-535baee

GDPR Anonymization Requirements

GDPR Anonymization vs Pseudonymization: Key Differences for Healthcare Data Transfers

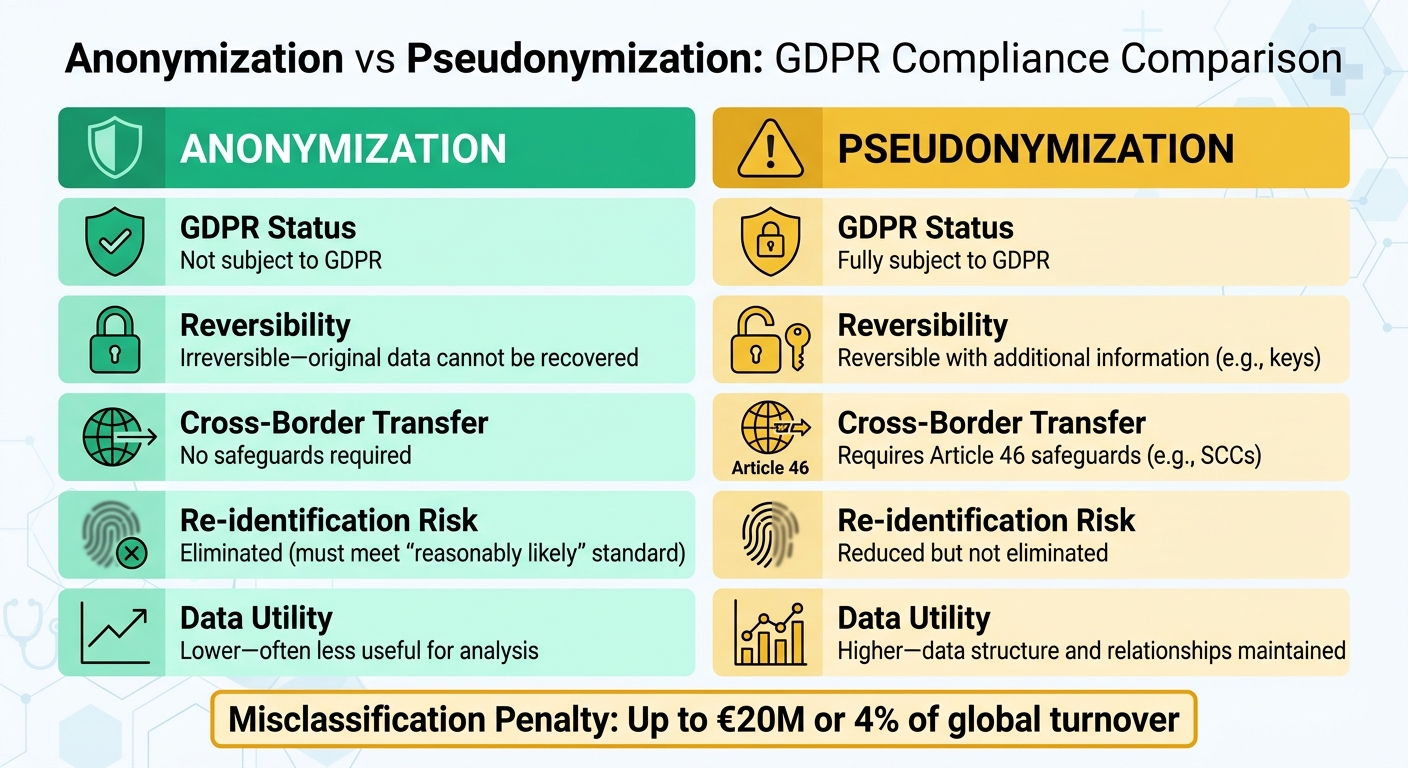

For healthcare organizations managing cross-border data transfers, understanding the distinction between anonymized and pseudonymized data is crucial. GDPR Recital 26 defines anonymization as data that "does not relate to an identified or identifiable natural person" or has been processed so that the individual is "not or no longer identifiable" [1]. In simpler terms, true anonymization ensures data cannot be traced back to an individual, making it exempt from GDPR regulations.

"The principles of data protection should therefore not apply to anonymous information, namely information which does not relate to an identified or identifiable natural person or to personal data rendered anonymous in such a manner that the data subject is not or no longer identifiable." – GDPR Recital 26 [1]

On the other hand, pseudonymization, as outlined in Article 4(5), modifies personal data so it cannot be linked to a specific person without additional information - like encryption keys or mapping tables [3]. However, since pseudonymized data is still considered personal data, it remains subject to GDPR rules, including requirements for consent and safeguards for international transfers.

The stakes for compliance are high. Misclassifying data can lead to penalties of up to €20 million or 4% of global turnover [1]. Adding to the pressure, the EDPB's 2025 Coordinated Enforcement Framework has flagged "ineffective anonymization methods replacing data deletion" as a recurring compliance issue, signaling heightened regulatory attention [3].

With this context in mind, the following sections dive into the criteria and challenges involved in achieving GDPR-compliant anonymization.

What Makes Anonymization GDPR-Compliant

To meet GDPR standards, anonymization must pass the "means reasonably likely" test. This means the data must withstand re-identification attempts, taking into account current technologies, costs, and available data sources [2]. Effective anonymization must prevent risks like singling out individuals, linking data points, or inference attacks [2].

Healthcare data poses unique challenges. Research shows that even minimal data points - like zip codes, birth dates, and gender - can uniquely identify individuals in many cases [2]. Aggregate data isn't automatically safe either; techniques like "differencing attacks", where database queries are compared with and without an individual's data, can expose sensitive information. To minimize these risks, healthcare datasets often require safe group sizes, typically between 10 and 100 individuals, to reduce the chances of re-identification [2].

Organizations must document their anonymization methods in the Data Protection Impact Assessment (DPIA). This documentation should include the chosen approach (e.g., redaction or high-entropy hashing), the rationale for deeming the method irreversible, and any residual re-identification risks [3]. Permanent redaction or high-entropy hashing are common for true anonymization, while pseudonymization methods like encryption, masking, or tokenization are used when data utility needs to be preserved [3].

Here’s a quick comparison:

| Dimension | Anonymization | Pseudonymization |

|---|---|---|

| GDPR Status | Not subject to GDPR | Fully subject to GDPR |

| Reversibility | Irreversible - original data cannot be recovered | Reversible with additional information (e.g., keys) |

| Cross-Border Transfer | No safeguards required | Requires Article 46 safeguards (e.g., SCCs) |

| Re-identification Risk | Eliminated (must meet "reasonably likely" standard) | Reduced but not eliminated |

| Data Utility | Lower - often less useful for analysis | Higher - data structure and relationships maintained |

Healthcare Data Anonymization Challenges

Healthcare organizations face a tough challenge when anonymizing data. Patient records often include combinations of quasi-identifiers - like age, gender, location, and medical conditions - that can be re-identified when matched with external datasets [4]. Location data, in particular, is notoriously difficult to anonymize effectively [4].

At the same time, healthcare data needs to remain useful for research and analysis. For example, generalizing data (e.g., replacing exact ages with age ranges) can help anonymize it, but overly generalized data may lose its value for research purposes [2]. A common mistake is assuming that removing IP addresses is enough. In reality, other identifiers, like browser fingerprints (e.g., screen resolution, operating system, browser version), can uniquely identify users in large populations [2].

Given these challenges, some organizations rely on Article 89's research exemptions. These allow personal data to be processed for research purposes under strict safeguards, which can be more practical than fully anonymizing highly detailed datasets [4]. Ultimately, the right approach depends on your specific needs, the level of data utility required, and acceptable risk levels. These complexities underscore the importance of robust anonymization techniques, which are explored further in the next sections.

Anonymization Techniques for Healthcare Data

When transferring healthcare data across borders, anonymization techniques can serve as crucial safeguards under GDPR. The choice of technique depends on how the data will be used - whether for analytics, research, or operational tasks. Let’s break down some of the most widely used methods.

k-Anonymity

k-Anonymity works by grouping individuals into equivalence classes, ensuring that no one can be uniquely identified within a dataset. For instance, instead of having data on a single 42-year-old female patient with diabetes in Boston, the dataset would include at least k individuals (commonly 5-10) sharing the same characteristics.

This method addresses GDPR’s concerns about re-identification risks, particularly for sensitive data like genetic or biometric information. To implement k-anonymity, healthcare organizations often use quasi-identifiers such as age ranges, geographic areas, and diagnosis categories to create these protective groups. While increasing the k value improves privacy, it can also limit the dataset’s usefulness for research. Striking the right balance ensures compliance with GDPR while maintaining data utility for international transfers.

"Adopt structured pseudonymization that prevents easy re-linkage; apply k-anonymity or differential privacy techniques for analytics where appropriate." – Kevin Henry, HIPAA Specialist, AccountableHQ [5]

Differential Privacy

Differential privacy involves adding controlled mathematical noise to data outputs. This ensures that the inclusion or exclusion of a single individual’s data doesn’t significantly affect the results, making it ideal for sharing statistical insights without revealing personal details.

The technique relies on an epsilon value to manage the balance between privacy and accuracy. Lower epsilon values (e.g., 0.1-1.0) offer stronger privacy by adding more noise, while higher values prioritize accuracy at the expense of privacy. Healthcare teams often use differential privacy when creating reports on treatment outcomes, disease prevalence, or population health trends for international research collaborations. This approach ensures GDPR compliance while enabling meaningful data sharing.

Generalization and Suppression

Generalization simplifies data by replacing specific values with broader categories. For example, exact ages might be converted into ranges (e.g., 20-30 years), precise locations into regions (e.g., Northeast U.S.), or detailed diagnoses into broader categories. Suppression, on the other hand, removes specific identifiers or outliers entirely.

These techniques align with GDPR’s data minimization principle, ensuring that only the necessary data for a given purpose is transferred across borders. For example, in a clinical trial dataset, ages might be generalized into 10-year ranges, and rare conditions that could lead to identification might be suppressed. Organizations can integrate these methods into ETL pipelines before transferring data. Direct identifiers can be tokenized, with mapping tables stored locally using "hold-your-own-key" models.

Risk Assessments for Anonymized Data

Even after data is anonymized, healthcare organizations must rigorously test it to ensure individuals can't be re-identified, as required by GDPR Article 25 and Recital 26. Misclassifying pseudonymized data as fully anonymized can lead to costly penalties - up to 4% of global annual revenue under Article 83. This risk is particularly high during cross-border data transfers, where external datasets from different jurisdictions may enable re-identification. Between 2020 and 2023, European Data Protection Board audits showed that 87% of healthcare datasets tested had vulnerabilities to re-identification. A February 2018 Vanderbilt study highlighted the issue when it successfully re-identified 87.9% of individuals using just date of birth, gender, and ZIP code. This discovery led to updates in de-identification standards, reducing similar breaches by 40% [6]. Below, we explore methods to measure and mitigate these risks.

Measuring Re-Identification Risks

Re-identification often happens through linkage attacks, where anonymized datasets are matched with external data - such as voter records or social media profiles. A 2019 study revealed that 95% of U.S. individuals could be re-identified from anonymized location data using just 15 characteristics. This poses serious risks for mobile health tracking and cross-border transfers of Protected Health Information (PHI) [7].

One way to evaluate these risks is through probabilistic inference testing. This method looks at quasi-identifiers - combinations like ZIP code, birth date, and diagnosis codes - and calculates the likelihood of identifying an individual. According to ENISA guidelines, a risk score above 0.01 indicates a high vulnerability, signaling the need for stronger anonymization before transferring data across borders [8].

Healthcare datasets are especially tricky due to their complexity. For example, rare disease codes combined with demographic data can significantly increase re-identification risks. Temporal patterns in treatment records add another layer of complexity. Genomic data is particularly sensitive - a 2018 Nature study found that genomic data with just 40 markers could uniquely identify 99.99% of Europeans, making cross-border transfers under frameworks like the EU–U.S. Data Privacy Framework even more challenging [9].

To measure these risks, techniques like uniqueness testing and Monte Carlo simulations are commonly used. Uniqueness testing identifies datasets where over 5% of records are unique, signaling a high risk. Tools like ARX and Amnesia help calculate these risks, while NIST guidelines recommend keeping re-identification risks below 1 in 1,000 for high-risk healthcare data transfers [10].

Reducing Re-Identification Risks

Once risks are quantified using methods like probabilistic inference and uniqueness testing, targeted strategies can be applied to reduce them. Some effective measures include:

- K-anonymity: Ensuring k ≥ 10 to make it harder to isolate individuals in a dataset.

- Differential privacy: Adding noise with ε ≤ 1.0 to obscure individual data points.

- Data swapping: Exchanging data values to further limit re-identification risks.

Regular audits are essential. Tools like IBM OpenPDS have been shown to reduce risks by 87% in clinical trial datasets, helping organizations maintain GDPR compliance during cross-border data transfers [11]. Best practices suggest performing quarterly risk assessments using automated tools such as SDCPrivacy software. A typical workflow includes a baseline risk scan, application of noise injection techniques, and follow-up validation testing. By 2023, 62% of EU healthcare organizations conducted quarterly audits - up from just 28% in 2019 [12].

Scenario-based simulations, particularly those incorporating cross-border auxiliary data, help organizations anticipate potential attack vectors. For example, platforms like Censinet RiskOps™ allow healthcare providers to benchmark anonymization practices, simulate re-identification scenarios, and detect vulnerabilities in EU–U.S. data transfers [13].

The stakes for inadequate assessments are high. In the 2015 Anthem breach, poor anonymization allowed probabilistic matching of 78.8 million records, leading to GDPR-equivalent fines of roughly €20M. A 2021 Health Affairs study emphasized the importance of mandatory pre-transfer risk scoring and using dynamic protocols. Hybrid techniques can play a critical role in ensuring compliance during cross-border data exchanges [14].

Implementing Anonymization for Cross-Border Transfers

To meet GDPR Article 25 requirements for securing patient data during international transfers, healthcare organizations need a structured anonymization process. This ensures compliance while safeguarding sensitive information in cross-border exchanges. A well-defined workflow is essential, as improper anonymization can lead to compliance failures and steep penalties.

Anonymization Process Steps

A robust anonymization process involves four main steps:

-

Step 1: Conduct a Data Inventory

Start by cataloging sensitive data. Identify both direct identifiers like patient names or medical record numbers and indirect ones, such as rare diagnoses combined with ZIP codes. For example, combining gender with a postal code could unintentionally expose identities. -

Step 2: Choose Appropriate Techniques

Select methods that balance privacy with data usability. For tabular data, k-anonymity (with k≥5) is effective, while genomic datasets often benefit from differential privacy (using ε≤1.0). These techniques help mask identities while preserving data utility. -

Step 3: Implement and Validate Techniques

After applying anonymization methods, validate their effectiveness. Run re-identification tests to confirm the risk of exposure remains below 1%. For instance, tools like ARX anonymization software can examine datasets (e.g., 10,000 records) against external sources to ensure no unique matches can be found. -

Step 4: Maintain Detailed Documentation

GDPR Article 25 mandates thorough records of anonymization efforts. Document methods, parameters (like k=10), risk metrics, and responsible personnel. Store these records in compliant systems for at least 10 years, as regulators may request them during audits or investigations.

Once these steps are completed, integrated risk management tools can help maintain compliance and streamline ongoing processes.

Anonymization and Risk Management Tools

Healthcare organizations are increasingly turning to platforms that combine anonymization workflows with risk management. One example is Censinet RiskOps™, which simplifies compliance by automating key tasks. This platform:

- Conducts risk assessments and tracks anonymization compliance across vendor networks.

- Validates anonymization techniques through collaborative audits.

- Generates automated reports that meet GDPR Article 25 requirements, cutting manual documentation efforts by up to 50%, as shown in real-world case studies.

Continuous monitoring is just as crucial as initial anonymization. Platforms like this offer real-time dashboards to track re-identification risks and flag potential issues. For example, if a vendor’s security score drops below an acceptable threshold, the system alerts teams to intervene before violations occur. This is especially critical for organizations managing complex supply chains, where data often flows through multiple vendors. Monitoring these "fourth-party" risks ensures compliance across all levels of the network.

Conclusion

GDPR-compliant anonymization has reshaped healthcare data transfers by ensuring patient information remains non-identifiable. This not only sidesteps strict transfer safeguards but also protects patient privacy while enabling data sharing for research and collaboration. The result? Improved operational efficiency and reduced risks.

The impact of anonymization goes far beyond meeting legal requirements. According to a 2023 ENISA report, EU pilot programs saw a 40% drop in re-identification risks after implementing anonymization techniques. Similarly, HIMSS Analytics reported in 2025 that organizations using integrated risk platforms reduced compliance violations by 55%. A practical example comes from Roche, which in Q1 2024 adopted GDPR-compliant anonymization for clinical trial data transfers to the U.S. Using methods like k-anonymity and differential privacy, they lowered re-identification risks from 15% to under 0.5%. This effort anonymized 2.5 million records, saved $1.2 million in compliance costs, and sped up research timelines by 25%.

To maintain these successes, continuous compliance is critical. Regular risk assessments - such as uniqueness testing and monitoring for new AI-driven linkage threats - are key. Tools like Censinet RiskOps™ simplify this process by automating risk scoring for anonymized data, conducting thorough third-party vendor assessments, and offering real-time monitoring across data pipelines. This comprehensive approach ensures the integrity of anonymization, safeguarding secure and compliant data transfers across borders.

FAQs

How can I prove our data is truly anonymized under GDPR?

To show that your data complies with GDPR anonymization standards, it's crucial to document the methods used to anonymize the data. This includes detailing the techniques and processes applied. Additionally, conducting risk assessments to evaluate re-identification risks is a key step. These assessments help identify any vulnerabilities that could lead to individuals being identified from the data.

Using established methods like k-Anonymity, Differential Privacy, or Expert Determination can further support your compliance efforts. These frameworks provide structured approaches to ensure the data cannot be traced back to specific individuals, helping you align with GDPR requirements.

When is pseudonymization enough for cross-border transfers?

Pseudonymization can be enough for cross-border data transfers if it effectively minimizes the risk of re-identifying individuals and is combined with appropriate safeguards. However, under GDPR, pseudonymized data is still subject to regulation because re-identification remains possible if someone gains access to the key. To stay compliant, it's crucial to implement robust safeguards that align with GDPR standards.

What’s the best way to balance data utility and re-identification risk?

To manage the trade-off between data usefulness and the risk of re-identification, rely on privacy-preserving techniques such as k-anonymity, differential privacy, or expert determination. These approaches help protect sensitive information while ensuring the data remains practical for analysis and informed decision-making.